At Weather Radio Review, we are committed to providing our readers with the most accurate and comprehensive reviews of weather radios.

Our rigorous testing process ensures that each product is thoroughly evaluated for its performance, reliability, and overall value. Here’s a detailed look at how we conduct our tests:

In the interest of transparency, we want to disclose how we select weather stations and gadgets, how we accept them for testing, and how we test them.

Many review sites don’t test the products; they rely on customer reviews to form their opinions. We do things a bit differently.

- How We Select What to Test

- How We Select Products for Our Roundups

- How We Test

- Our New Rating Formula

- Accuracy (Or performance, as applicable) (25%)

- Affordability (25%)

- Durability (20%)

- Feature Set (15%)

- Ease of Use/Usability (15%)

How We Select What to Test

Weather stations and gadgets selected for testing meet our requirements for a standard feature set, and they often have standout features as well. We do accept devices that manufacturers offer for testing.

We do not receive monetary compensation from manufacturers in exchange for reviews. Occasionally, manufacturers might purchase advertising through our ad partners, Ezoic and Google AdSense, or directly from us. We do not actively solicit companies to advertise on reviews of their products, nor do any ad purchases affect the outcomes of those reviews.

Our privacy policy includes more information on our advertising policies.

Our team begins by researching the latest weather radios on the market. We consider popular models from reputable brands, as well as new releases that show promise based on specifications and user feedback.

We aim to cover a diverse range of products to meet the needs of various users, from casual listeners to emergency preparedness enthusiasts.

How We Select Products for Our Roundups

We must occasionally include products we have not had personal experience with, as testing every device is impossible. These frequently appear in our product roundups. When these products are included, we rely on reviews on retailer websites such as Amazon. Sometimes, we may write a review based on our knowledge of a similar model.

For example, Ecowitt stations, for the most part, aren’t sold in the U.S. However, since Ambient Weather and Ecowitt use the same hardware, we can write a review with a high degree of accuracy even though the product isn’t in our hands.

Most retail sites mark reviews from actual customers as a “verified purchase.” These are the reviews we use to judge products for selection in a product roundup. We do not use non-verified reviews in our product selections.

How We Test

Weather station testing is far from an exact science. However, we do follow a standard method to test our stations.

All stations tested are placed on the same mount in the same location at our testing site. Sensors are compared with analog instruments where possible, and when an analog instrument isn’t available, a nearby NOAA weather observing station is used.

The typical test lasts about 2-4 weeks, depending on the weather variability during the testing period. Select stations remain installed past the initial review period, allowing us to provide long-term reviews of these stations. For example, our Davis Vantage Vue has been continuously operating since our initial test in September 2016!

Our New Rating Formula

In the past, we have rated devices subjectively. However, we wanted to standardize our ratings to make them more objective.

The result is a new rating formula that took effect across our network on June 1, 2022. With it, a “five-star rating” has become considerably harder to earn, and it should be reserved for the genuinely top-tier devices in every category.

So, how are our ratings determined? We weight each factor based on what we think is most important, with accuracy/performance and affordability together making up half the rating. Durability is another crucial factor in a highly rated device. Finally, we consider the feature set and ease of use before calculating the overall rating, which is the star rating you see.

Here is the current weighting:

Accuracy/Performance: 25%

Affordability: 25%

Durability: 20%

Feature set: 15%

Ease of use/usability: 15%

We will regularly review our weighting and adjust our model as necessary. Of course, if our weighting changes, we’ll let you know. However, accuracy/performance and cost are the two areas on which we find most of our readers base their purchase decisions.
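To make the weighting concrete, here is a minimal Python sketch of how the five category scores could be combined. It assumes the overall rating is a plain weighted average of the 1 to 5 category scores; how that average is rounded to a displayed star rating is not covered here. The names `WEIGHTS` and `overall_rating` and the example scores are illustrative only.

```python
# Weights taken from the "current weighting" list above.
WEIGHTS = {
    "accuracy": 0.25,
    "affordability": 0.25,
    "durability": 0.20,
    "feature_set": 0.15,
    "ease_of_use": 0.15,
}


def overall_rating(scores):
    """Return the weighted average of the five category scores (each 1 to 5).

    Assumption: the overall rating is a simple weighted average.
    """
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the five categories")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} score must be between 1 and 5")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical device, not a real review result.
example = {
    "accuracy": 4,
    "affordability": 3,
    "durability": 5,
    "feature_set": 4,
    "ease_of_use": 3,
}
print(round(overall_rating(example), 2))
```

Because accuracy/performance and affordability carry 25% each, a one-point change in either moves the overall rating by 0.25, compared with 0.15 for feature set or ease of use.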

We plan to move to a scoring system (out of 100 points) soon, allowing us to offer a more exact rating of the products we review. You’ll see that rolled out over the summer, but we first needed to standardize our ratings so that all devices are tested and rated using the same scale.

Accuracy (Or Performance, As Applicable) (25%)

We judge devices based on their accuracy or performance. To score high, a device must be highly accurate or high-performing. A device must score average (3) or better to qualify for our “best of” lists.

5 – Pro-grade accuracy or performance (generally suitable for scientific or mission-critical applications).

4 – Above average

3 – Average

2 – Below average

1 – Poor accuracy or performance

Affordability (25%)

Devices are judged against the average price of the devices on our recommended list. A device must have a retail price well below the average to score high here, but no minimum score is needed to be eligible for our “best of” lists.

5 – Well below the average price

4 – Below the average price

3 – Average

2 – Above the average price

1 – Well above the average price

Durability (20%)

Most devices we accept for review are highly durable and well-constructed, so devices will often score high in this category. However, a device must score at least three stars to be included in our “best of” lists.

5 – Solid construction, appears very durable

4 – Generally good construction

3 – Average durability

2 – Below average durability, weak construction in parts

1 – Poor or deficient construction

Feature Set (15%)

Within each type of device we review, most devices share a standard set of features. Offering more than that basic feature set helps a device score high here.

5 – Basic features + additional standard features, expandability

4 – Basic feature set but expandable

3 – Basic Feature Set (for weather stations, must have temp, humidity, wind speed, direction, rainfall), but not expandable

2 – Some basic features are missing

1 – Minimal feature set

Ease Of Use/Usability (15%)

But how easy is it to use? We also call this a usability score.

5 – Best in class, intuitive, well-designed U.I.

4 – Easy to use with few U.I. issues

3 – Somewhat difficult to use but manageable

2 – Difficult to use

1 – Very difficult to use

The nice thing about our new rating system is that it also allows us to offer more specific ratings for each of the factors we look for, which we’ve found our readers consider when making a buying decision. We’ll add these analyses to our “best of” pages, making them even more helpful.
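The category descriptions above also set minimum scores for our “best of” lists: average (3) or better for accuracy/performance, at least three stars for durability, and no minimum for affordability. The sketch below shows that check in Python. No minimum is stated for feature set or ease of use, so treating them as unrestricted is an assumption, and the names `MINIMUMS` and `eligible_for_best_of` are illustrative only.

```python
# Minimum category scores stated in the category descriptions.
# Categories not listed here are assumed to have no minimum.
MINIMUMS = {
    "accuracy": 3,
    "durability": 3,
}


def eligible_for_best_of(scores):
    """Return True if every category with a stated minimum meets it."""
    return all(scores[name] >= minimum for name, minimum in MINIMUMS.items())


# Hypothetical devices, not real review results.
budget_pick = {
    "accuracy": 3,
    "affordability": 5,
    "durability": 3,
    "feature_set": 2,
    "ease_of_use": 4,
}
fragile_pick = dict(budget_pick, durability=2)

print(eligible_for_best_of(budget_pick))
print(eligible_for_best_of(fragile_pick))
```

A check like this is separate from the weighted overall rating: a device with a high overall score can still be excluded if it falls below a minimum in one category.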

Performance Testing

Our performance tests are designed to evaluate each weather radio’s key features and functions. This includes:

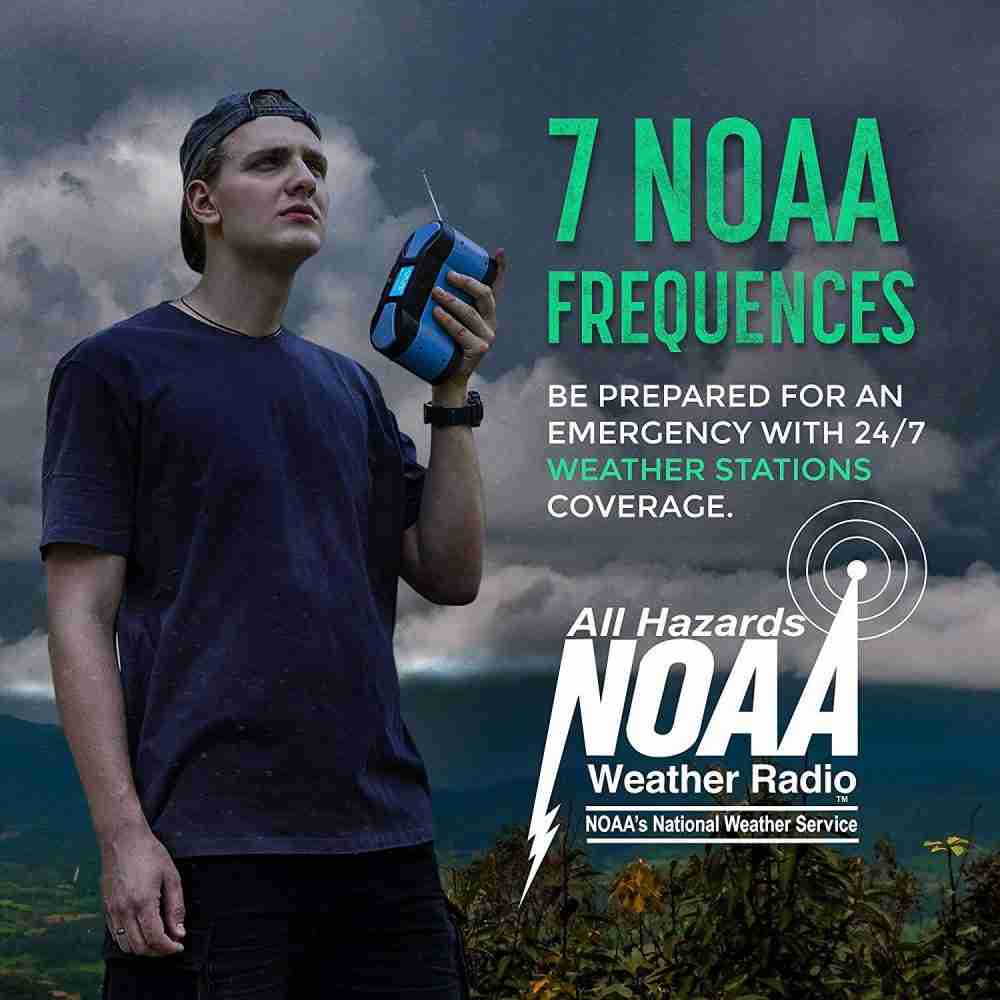

- Reception Quality: We test the radio’s ability to receive clear and consistent signals from the National Weather Service (NWS) across various locations and conditions.

- Alert System: We assess the effectiveness of the radio’s alert system and ensure it reliably activates for weather warnings and emergency alerts.

- Sound Quality: We evaluate the clarity and volume of the radio’s speaker, ensuring it is loud enough to be heard in noisy environments.

- Battery Life: We test the battery life under typical usage conditions to determine how long the radio can operate on a full charge or set of batteries.

- Additional Features: We test any additional features, such as hand crank generators, solar panels, flashlights, and USB charging capabilities.

Real-World Testing

We conduct real-world testing scenarios to ensure our reviews are practical and relevant. This involves using the radios in different environments and weather conditions, from urban areas to remote locations. We simulate emergencies to see how the radios perform when needed most.

Long-Term Use

For a comprehensive evaluation, we also consider long-term use. We monitor the radios over an extended period to identify any durability issues or performance changes that may arise with regular use.

Comparative Analysis

After testing, we compare the results with those of other models in the same category. This helps us highlight each product’s strengths and weaknesses relative to its competitors, providing a clear picture of its value.

Expert Reviews and User Feedback

In addition to our hands-on testing, we gather expert reviews and user feedback from various sources. This helps us corroborate our findings and provide a well-rounded perspective on each product.

Final Review

Our final review combines all aspects of our testing process. We provide detailed insights into each weather radio’s performance, features, and overall value. We aim to equip you with the information you need to make an informed purchasing decision.

At Weather Radio Review, we pride ourselves on our thorough and unbiased testing process. Thank you for trusting us as your go-to source for weather radio reviews.

Affiliate Disclosure

While Weather Radio Review does not solicit or accept monetary contributions for reviews, we may sometimes be compensated for purchases made through links on our site. This compensation may come from a retailer offering the product or directly from the manufacturer.

This relationship does not influence how we test or affect our opinions on a product. For more, read our affiliate link policy.

U.S. Federal Trade Commission guidelines require us to disclose this relationship, and where such links are present, we include a disclosure in a prominent location visible to the reader.

Why you can trust our recommendations

We have experience with all the products and companies we recommend here on TWSE. Our review staff includes degreed meteorologists and scientists, some of whom have owned the products they review for several years. Our staff has reviewed home weather gadgets for over a decade on TWSE and elsewhere.

How We Test

A weather station or gadget must score highly in our scoring metrics in several key areas, including accuracy, value, durability, ease of use, and feature set. We accept products for review, but we do not accept compensation in exchange for a positive review.
